Azure Cosmos DB is horizontally partitioned, globally distributed, multi-model and No SQL database service. Any application that needs to handle massive amounts of varied data, reads and writes at a globally distributed scale with near-real time response are great use-cases for Cosmos DB. It is a non-relational database designed to store documents that have no fixed structure and uses JavaScript Object Notation (JSON) as the format for structuring the data. The benefit is that it is lightweight and easily modifiable, and you are not bound by any rigid schema.

To cop up with emerging technology changes, applications are required to handle different variety and volume of data and needs to be highly responsive. SQL server database is not able to handle these features and that’s where the need for migrating data from SQL server to Cosmos DB arises.

In this article, we will see how to migrate data from SQL server to Azure Cosmos DB using Data Migration Tool.

DATA MIGRATION TOOL

Data Migration tool can import data from variety of sources into Azure Cosmos DB collections and tables. It can import SQL, MongoDB, Azure Table storage, JSON files, CSV files and even Azure Cosmos DB SQL API collections. Data migration tool includes:

- dtui.exe – Graphical User Interface.

- dt.exe – Command Line Interface.

This tool can be downloaded from here.

For this article, we are going to import SQL server data to Cosmos DB using Data Migration tool GUI.

IDENTIFY THE DATA

The first and the most important step for every data migration process are to identify the data that needs to be migrated. The main point to be considered while identifying the data to import from SQL Server to Cosmos DB is the data require some sort of transformation as it needs to bridge the gap between the normalized data in SQL Server and its de-normalized representation in JSON.

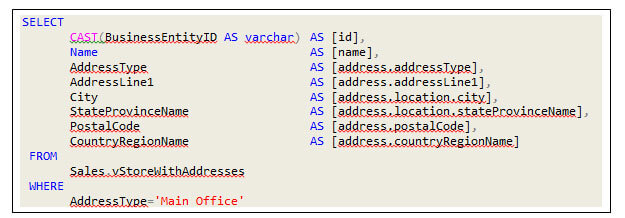

So keeping this in mind query can be constructed as

1.Simple Select Query:

Like select * from < table name > where each row will be transformed into a flat document with each column exported as a simple property in the root.

2.Complex Query:

Like multiple tables joined query, where each row will be transformed into complex JSON document with nested properties.

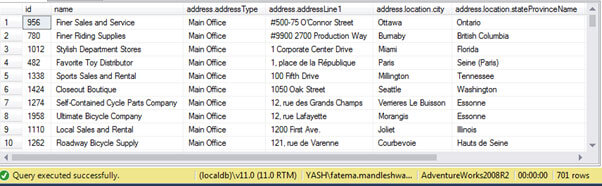

Take an example of complex query, examine each column and define an alias name with dot symbol to infer it in desired nested property. The dotted notation of these column names means nothing to SQL Server but it tells the migration tool how to shape each document being imported.

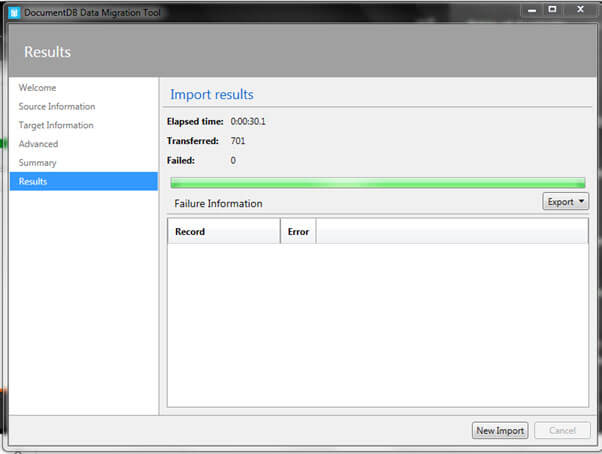

Here in this example the tool will figure out that each document has an ID, name, and address property in its root and an address property as object with nested properties. Also inside the address, there are several address-related properties grouped together. There is another property location, with more nested properties for city and state province name. The main point here to note is each row of the SQL query result will map to self-contained JSON document for Cosmos DB. As this example query results in 701 records in SQL so it will be converted into 701 documents in Cosmos DB.

MIGRATION STEPS

Welcome page will appear then click Next.

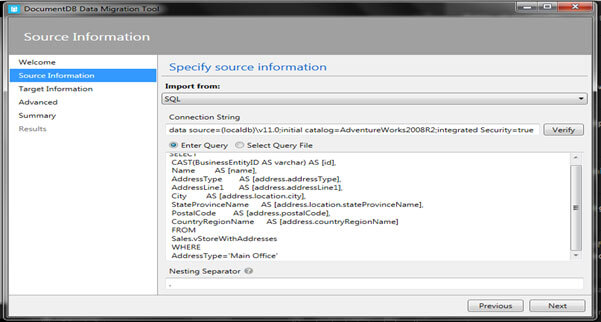

2. Provide Source Information

- Select Import from – SQL

- Provide Connection String of your source SQL database for example – data source=(localdb)\v11.0;initial catalog=AdventureWorks2008R2;integrated Security=true

- Choose between Enter Query or Select Query File. I have Inserted identified SQL Query in the text box.

- Insert Nesting Separator as dot symbol.

- Click Next.

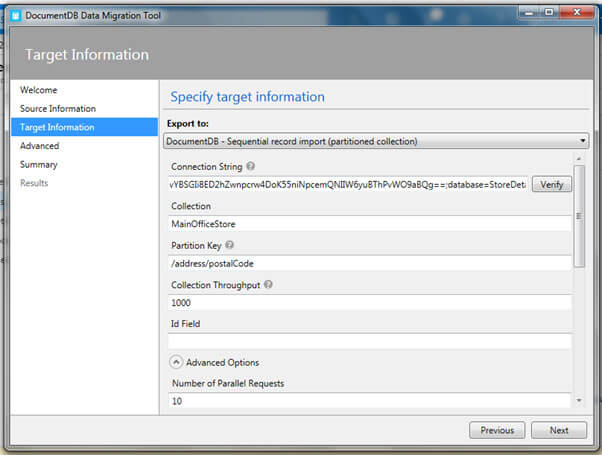

3. Provide Target Information

- Choose Export To

- In this drop down, you have several export options. You can either export to the SQL API in DocumentDB (new name Cosmos DB) or you can generate the JSON files that could subsequently be exported to Cosmos DB.

- In Export to Document DB there are two choices Bulk versus sequential record import. Bulk is generally faster than sequential record but only sequential record import is supported for partition collections.

- So I have chosen DocumentDB – Sequential record import

- Connection String – Provide Cosmos DB Account primary connection string and append it with database name (database=StoreDetails)

If database does not exist, tool will create it automatically. - Collection name – MainOfficeStore

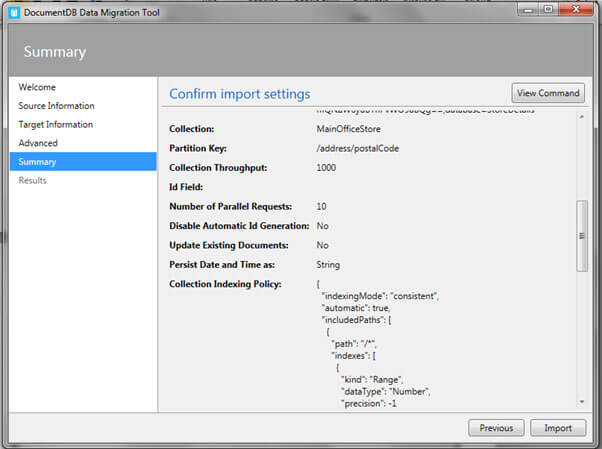

- Partition Key – /address/postalCode (postal code under address property)

- Collection Throughput- 1000 (default Request Unit per second)

- ID – it is optional. If we have some other unique identifier property then ID in our query, it can be mentioned here and if nothing has been provided neither in query nor here then Cosmos will automatically generate GUID as Id. Remember that ID property is always string.

- Go to Advance options and provide Indexing Policy either by entering or providing a file. So select Enter Indexing policy, right click in the textbox we have two options either default or range. I have selected Range here as it helps with range queries and sorting.

- Click Next.

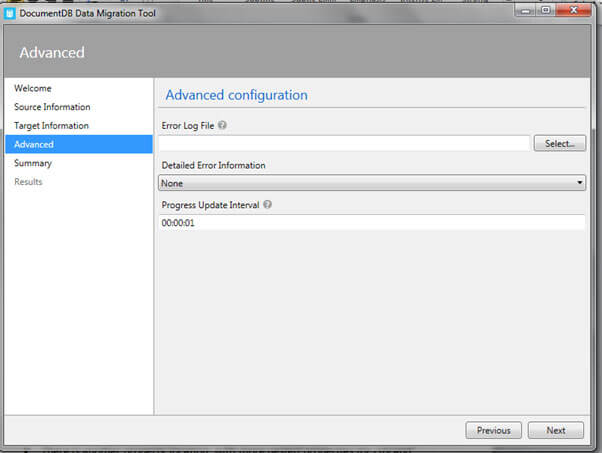

4. Advanced

If you want to generate Error log file, you can mention it or skip this step.

5. Check Summary

Verify the Summary page and click Import.

6. Migration Completed

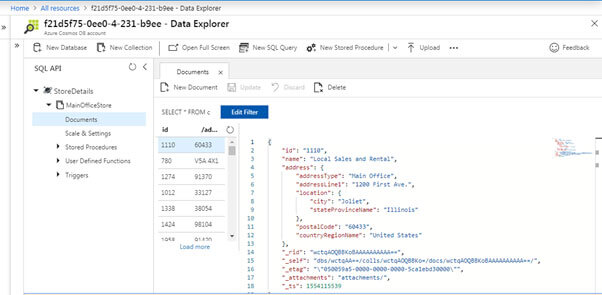

7. Verify in Azure portal

Go to Cosmos DB Account -> Data explorer and click refresh

Here you will see database; collection and documents migrated by the tool.

We have successfully migrated data from SQL Server to Cosmos DB.