Classical Migration Of On-Premises SAP On DB2 To SAP HANA On AWS

Publish Date: June 18, 2021

Introduction

In this blog, we discuss the classical migration of SAP ECC running on DB2 on-premises to SAP HANA on AWS. To keep the downtime within a single weekend, we used a parallel export/import approach to move the system from the Netherlands data center to the AWS US West (Oregon) Region.

The customer had been running both its Netherlands and US entities in a single production system, in two different SAP clients. As part of the migration, it was decided to carve the US business data out of the existing SAP system and to set up a new SAP landscape running on SAP HANA in the AWS US Region.

Migration and Data Carving

To protect the data, the carve-out had to be performed in the source data center. A copy of the existing system was built using a system copy, and the Netherlands entity's client was then deleted from the newly built SAP system.

Because a direct connection to the AWS US Region was not an option for data privacy reasons, we chose a classical migration: the export ran on the newly built SAP system on-premises, and the export files were then imported into the SAP system running on AWS.

To reduce downtime, we used parallel export/import, and an sFTP endpoint was set up to transfer the export files from on-premises to AWS.
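To illustrate why parallel export/import shortens the downtime window, here is a simplified model in Python. It assumes, hypothetically, a single worker per stage (export, sFTP/S3 transfer, import) and made-up per-package durations in hours; real runs use many parallel R3load processes, so the numbers are illustrative only. In the sequential case nothing is imported until every package has been exported and transferred, whereas in the parallel case a package can be imported as soon as it has arrived on the target.

```python
from typing import List, Sequence


def sequential_hours(stages: Sequence[Sequence[float]]) -> float:
    """Export everything, then transfer everything, then import everything."""
    return sum(sum(stage) for stage in stages)


def pipelined_hours(stages: Sequence[Sequence[float]]) -> float:
    """Makespan when packages flow through the stages independently.

    Package i enters stage s once stage s has finished package i-1 (the worker
    is free) and stage s-1 has finished package i (the input is ready).
    """
    prev_stage: List[float] = [0.0] * len(stages[0])
    for durations in stages:
        finished: List[float] = []
        for i, duration in enumerate(durations):
            input_ready = prev_stage[i]
            worker_free = finished[i - 1] if i > 0 else 0.0
            finished.append(max(input_ready, worker_free) + duration)
        prev_stage = finished
    return prev_stage[-1]


if __name__ == "__main__":
    # Hypothetical durations (hours) for three packages: export, transfer, import.
    stages = [[2, 2, 2], [1, 1, 1], [2, 2, 2]]
    # prints: sequential=15 h, pipelined=9 h
    print(f"sequential={sequential_hours(stages):g} h, pipelined={pipelined_hours(stages):g} h")
```

The model ignores contention between stages (for example, export and upload competing for the same network link), which is why the real gain has to be measured in test iterations.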

Requirements

Source: Sufficient disk space was made available for the export files, and the hardware configuration was tuned over multiple test iterations to maximize export throughput.
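A pre-flight space check on the source can be sketched with the Python standard library. The export size estimate is assumed to come from a previous test iteration, and the 20% headroom is a hypothetical safety margin, not an SAP recommendation.

```python
import shutil


def has_room_for_export(export_dir: str, estimated_export_bytes: int, headroom: float = 0.2) -> bool:
    """Return True if export_dir's file system can hold the export plus a safety margin.

    estimated_export_bytes: size observed in an earlier test export (assumption).
    headroom: hypothetical extra fraction kept free for logs and temporary files.
    """
    if headroom < 0:
        raise ValueError("headroom must be non-negative")
    free_bytes = shutil.disk_usage(export_dir).free
    return free_bytes >= estimated_export_bytes * (1 + headroom)


if __name__ == "__main__":
    # Hypothetical export directory and a 500 GiB estimate.
    print(has_room_for_export("/", 500 * 1024**3))
```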

Target: An sFTP server on AWS Transfer Family was set up and mapped to an Amazon S3 bucket. The SAP HANA database was installed, and the post-installation activities were completed.

For more information about AWS Transfer for SFTP (now part of AWS Transfer Family), see https://aws.amazon.com/aws-transfer-family

Target System Preparation

AWS Launch Wizard for SAP was used to provision the AWS instances with the SAP HANA database. The wizard reduces the time it takes to deploy SAP applications on AWS.

For more information about AWS Launch Wizard, see https://docs.aws.amazon.com/launchwizard/

Migration Process

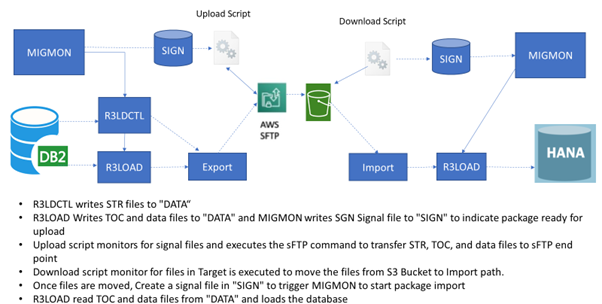

The classical migration with the parallel export/import option was started on the source and target systems using SWPM. Automated OS-level scripts were created to supplement the standard SAP migration process. These scripts handle the physical movement of the generated export files in two hops: from the source system to the sFTP endpoint, which stores them in Amazon S3, and from Amazon S3 to the target SAP server.

The upload script is started on the source system and first uploads the STR files. It then monitors for signal files created by MIGMON, each of which indicates that a package has been exported and is ready for transfer. When a signal file is detected, the TOC and data files associated with that package are uploaded to the sFTP endpoint.
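The upload loop can be sketched in Python as follows. This is not the customer's script; it is a minimal sketch under stated assumptions. The directory layout is hypothetical: STR, TOC, and numbered data files (`<PKG>.001`, `<PKG>.002`, ...) are assumed to sit in one export data directory, and MIGMON is assumed to write `<PKG>.SGN` into a separate exchange directory when a package is exported. The `upload` callable is also hypothetical; in practice it would wrap an sFTP client, for example paramiko's `SFTPClient.put`.

```python
import time
from pathlib import Path
from typing import Callable, List, Set

# Hypothetical callable that copies one local file to the sFTP endpoint.
Upload = Callable[[Path], None]


def upload_str_files(data_dir: Path, upload: Upload) -> List[str]:
    """Upload every STR file before any package data, as described above."""
    names = []
    for path in sorted(data_dir.glob("*.STR")):
        upload(path)
        names.append(path.name)
    return names


def package_files(data_dir: Path, package: str) -> List[Path]:
    """TOC file plus the numbered data files (<PKG>.001, <PKG>.002, ...) of one package."""
    data_files = sorted(data_dir.glob(f"{package}.[0-9][0-9][0-9]"))
    return [data_dir / f"{package}.TOC", *data_files]


def upload_ready_packages(exchange_dir: Path, data_dir: Path,
                          uploaded: Set[str], upload: Upload) -> List[str]:
    """One polling pass: send packages whose signal file exists and that were not sent yet."""
    newly_sent = []
    for signal in sorted(exchange_dir.glob("*.SGN")):
        package = signal.stem
        if package in uploaded:
            continue
        for path in package_files(data_dir, package):
            upload(path)
        uploaded.add(package)
        newly_sent.append(package)
    return newly_sent


def watch(exchange_dir: Path, data_dir: Path, expected: Set[str],
          upload: Upload, interval_s: float = 30.0) -> None:
    """Poll until every expected package has been uploaded."""
    uploaded: Set[str] = set()
    while True:
        upload_ready_packages(exchange_dir, data_dir, uploaded, upload)
        if expected <= uploaded:
            return
        time.sleep(interval_s)
```

Keying on the signal file rather than on the data files means a package is never sent while R3load is still writing it.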

Once the STR, TOC, and data files of a package have reached the S3 bucket, a custom script on the target server downloads them. When the download is complete, the script creates a signal file that tells MIGMON on the target system that the package is ready to be loaded into the target database.
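The key rule on the target side is ordering: the signal file must appear only after every file of the package is complete. A minimal Python sketch of that final step is below. It assumes, hypothetically, that each file is downloaded under a temporary name and renamed when finished (for example from a wrapper around boto3's `download_file`), so `is_file()` only sees complete files, and that the list of expected file names is known from the S3 listing. If your MIGMON version expects content in the signal file, pass the source-side signal file's bytes as `signal_content`; the empty default is an assumption.

```python
import os
from pathlib import Path
from typing import Iterable, Optional


def publish_signal(exchange_dir: Path, package: str, content: bytes = b"") -> Path:
    """Create <package>.SGN atomically so MIGMON never sees a half-written signal file."""
    final = exchange_dir / f"{package}.SGN"
    tmp = exchange_dir / f".{package}.SGN.tmp"
    tmp.write_bytes(content)
    os.replace(tmp, final)  # atomic rename when both paths are on the same file system
    return final


def finish_package(data_dir: Path, exchange_dir: Path, package: str,
                   expected_names: Iterable[str], signal_content: bytes = b"") -> Optional[Path]:
    """Publish the signal only when every expected file of the package is present."""
    missing = [name for name in expected_names if not (data_dir / name).is_file()]
    if missing:
        return None  # still downloading; try again on the next poll
    return publish_signal(exchange_dir, package, signal_content)
```

A stricter variant would also compare local file sizes with the S3 object sizes before publishing the signal.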

Post Migration

System parameters were tuned according to SAP recommendations, and automatic backups of the HANA database were scheduled using SAP Backint. Amazon CloudWatch monitoring was enabled for the SAP instance.
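A scheduled Backint backup ultimately issues a `BACKUP DATA ... USING BACKINT` statement. The helper below is a minimal sketch that builds a full data backup statement for a tenant database with a timestamped backup prefix; the tenant name `PRD` and the prefix format are hypothetical. The statement is meant to run against SYSTEMDB, and how it is scheduled (for example a cron job calling `hdbsql` with a secure user store key, or the HANA cockpit) is left to the environment.

```python
import re
from datetime import datetime, timezone


def backint_full_backup_sql(tenant: str, when: datetime) -> str:
    """Build a full data backup statement for one tenant, written through Backint.

    The tenant name is validated because it is interpolated into SQL.
    """
    if not re.fullmatch(r"[A-Za-z][A-Za-z0-9_]*", tenant):
        raise ValueError(f"unexpected tenant name: {tenant!r}")
    prefix = f"COMPLETE_DATA_BACKUP_{when:%Y%m%d_%H%M}"  # hypothetical naming scheme
    return f"BACKUP DATA FOR {tenant} USING BACKINT ('{prefix}')"


if __name__ == "__main__":
    print(backint_full_backup_sql("PRD", datetime.now(timezone.utc)))
```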

After all interfaces were established, a disaster recovery system was set up in the AWS US East (Ohio) Region using asynchronous SAP HANA System Replication.
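Asynchronous system replication is configured with `hdbnsutil`: replication is enabled on the primary, and the secondary is registered against it with `--replicationMode=async`. The Python sketch below only builds the two command lines so they can be reviewed or logged; the host name, instance number, and site names are hypothetical, and the `logreplay` operation mode is an assumption. Both commands run as `<sid>adm`, and the secondary database is typically stopped while it is registered.

```python
import shlex
from typing import List


def sr_enable_cmd(primary_site: str) -> List[str]:
    """Command for the primary (Oregon) host: enable system replication."""
    return ["hdbnsutil", "-sr_enable", f"--name={primary_site}"]


def sr_register_cmd(primary_host: str, instance_number: str, secondary_site: str,
                    operation_mode: str = "logreplay") -> List[str]:
    """Command for the secondary (Ohio) host: register it with asynchronous replication."""
    if not (len(instance_number) == 2 and instance_number.isdigit()):
        raise ValueError(f"instance number must be two digits: {instance_number!r}")
    return [
        "hdbnsutil", "-sr_register",
        f"--remoteHost={primary_host}",
        f"--remoteInstance={instance_number}",
        "--replicationMode=async",
        f"--operationMode={operation_mode}",
        f"--name={secondary_site}",
    ]


if __name__ == "__main__":
    # Hypothetical names for illustration only.
    print(shlex.join(sr_enable_cmd("OREGON")))
    print(shlex.join(sr_register_cmd("hana-prd-usw2", "00", "OHIO")))
```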

Benefits Realized

- Downtime kept within the single planned migration window

- IBM DB2 database migrated to SAP HANA

- Improved security posture through encrypted interfaces

- Increased compliance and simplified operations

- Ownership of the SAP landscape in the US region, giving better control over the application

- Reduced processing time for background jobs

- A unified platform for making effective, strategic decisions based on real-time data

- Faster query response times

Conclusion

Classical migration using parallel export/import significantly reduced downtime in a scenario where no direct connection between on-premises and AWS was available. The automated scripts removed the need to copy export files manually from the source system to the Amazon S3 bucket and from the S3 bucket to the target SAP application server. Configuring the number of R3load processes according to the available system resources is key to optimizing export and import throughput.
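As a starting point for test iterations, the number of parallel R3load jobs can be bounded by both CPU and memory. The helper below is a minimal sketch: the jobs-per-core ratio, memory per job, and OS reserve are hypothetical placeholders to calibrate in your own runs, not SAP guidance. The result is printed in the form of MIGMON's `jobNum` property.

```python
def r3load_jobs(cpu_cores: int, memory_gib: float, jobs_per_core: float = 2.0,
                gib_per_job: float = 1.0, reserve_gib: float = 8.0) -> int:
    """Suggest a parallel R3load job count limited by CPU and by memory.

    All ratios are hypothetical defaults; tune them from measured test runs.
    """
    by_cpu = int(cpu_cores * jobs_per_core)
    by_memory = int((memory_gib - reserve_gib) // gib_per_job)
    return max(1, min(by_cpu, by_memory))  # always run at least one job


if __name__ == "__main__":
    # Hypothetical host with 32 cores and 256 GiB of memory.
    print(f"jobNum={r3load_jobs(cpu_cores=32, memory_gib=256)}")  # prints jobNum=64
```

Taking the minimum of the two limits keeps a memory-constrained host from being overcommitted even when it has many cores.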